There are some explanations on the net of how GEF works, but I have not found a good description of how GEF really works, so I will try to unravel the secret and post it here for the world to know. My investigation began when I found that although GEF EditPolicy instances are installed in the EditPart using a key, this key was never used (well, everything kept working even after I changed all the keys to “chukumuku”). I started reading more and more code and was fascinated by how the framework works. So here it goes: what I have found in some nights of code reading and debugging.

We will start by explaining what happens when we select a tool and move the mouse over the editor. The editor itself is implemented on top of SWT (the Standard Widget Toolkit), which is similar to Java’s AWT (Abstract Window Toolkit). My knowledge of SWT is pretty limited, so it won’t be explained here. The only important thing to know is that a GEF editor’s graphics are drawn on an SWT Canvas, on which GEF draws the RootFigure, the top-level figure that contains everything else in the editor. The Canvas is attached to a LightweightSystem, which forwards user interaction from SWT to GEF. The drawing functionality of draw2d travels in the other direction, but it will not be discussed here.

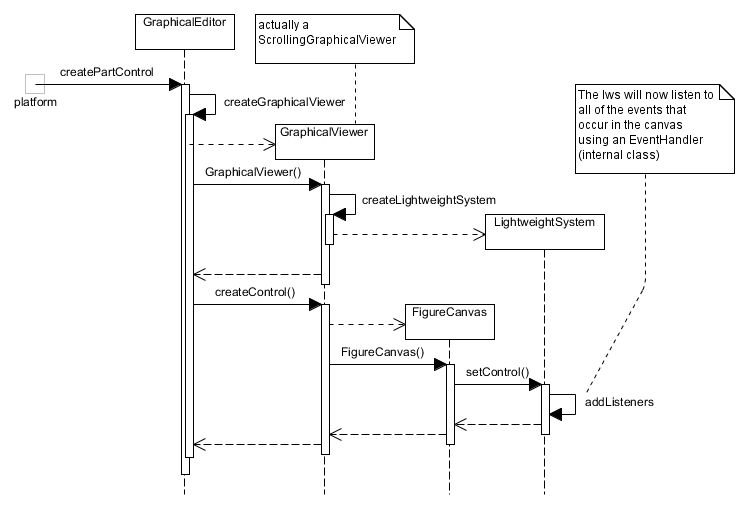

Looking at this from the other side, when a GEF editor is started, the platform calls createPartControl on the GraphicalEditor class. This method in turn calls createGraphicalViewer, which instantiates a new LightweightSystem and a Canvas on which to draw the EditPart instances. The process is better described in the following sequence diagram:

Knowing this, we can now look at what happens when the user interacts with the editor, using the SelectionTool as our example. As explained above, the LightweightSystem bridges the events coming from the SWT Canvas, so when the user clicks on the editor canvas, the event it fires is received by the LightweightSystem’s EventHandler. The EventHandler is an adapter to an EventDispatcher, which does the real event handling. In our case this is a DomainEventDispatcher, which holds a reference to the EditDomain containing our editor’s information.
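To make the forwarding chain easier to picture, here is a minimal sketch in plain Java. It is not GEF or draw2d code: every class name below (`ToyLightweightSystem`, `ToyEventHandler`, `ToyDomainEventDispatcher`, `ToyEditDomain`, `RawMouseEvent`) is a hypothetical stand-in that I made up, and the method names are only loosely modeled on the roles described above. It only shows the shape of the idea: a handler adapts toolkit callbacks to a dispatcher, and the dispatcher knows the domain.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical stand-in for a toolkit mouse event; it only carries coordinates. */
final class RawMouseEvent {
    final int x;
    final int y;

    RawMouseEvent(int x, int y) {
        this.x = x;
        this.y = y;
    }
}

/** Hypothetical dispatcher contract: the part that does the real event handling. */
interface ToyEventDispatcher {
    void dispatchMousePressed(RawMouseEvent event);
}

/** Hypothetical stand-in for the edit domain; it records what reached it. */
final class ToyEditDomain {
    final List<String> log = new ArrayList<>();

    void mousePressed(RawMouseEvent event) {
        log.add("domain.mousePressed(" + event.x + "," + event.y + ")");
    }
}

/** Dispatcher that holds a reference to the domain, as described in the text. */
final class ToyDomainEventDispatcher implements ToyEventDispatcher {
    private final ToyEditDomain domain;

    ToyDomainEventDispatcher(ToyEditDomain domain) {
        this.domain = domain;
    }

    @Override
    public void dispatchMousePressed(RawMouseEvent event) {
        domain.mousePressed(event);
    }
}

/** Adapter: receives the toolkit callback and hands it to the dispatcher. */
final class ToyEventHandler {
    private final ToyEventDispatcher dispatcher;

    ToyEventHandler(ToyEventDispatcher dispatcher) {
        this.dispatcher = dispatcher;
    }

    void mouseDown(RawMouseEvent event) {
        dispatcher.dispatchMousePressed(event);
    }
}

/** Bridge between the (simulated) canvas and the dispatcher. */
final class ToyLightweightSystem {
    private ToyEventHandler handler;

    void setEventDispatcher(ToyEventDispatcher dispatcher) {
        this.handler = new ToyEventHandler(dispatcher);
    }

    /** Simulates the canvas firing a mouse-down event at the given point. */
    void simulateCanvasClick(int x, int y) {
        handler.mouseDown(new RawMouseEvent(x, y));
    }
}

final class ForwardingDemo {
    public static void main(String[] args) {
        ToyEditDomain domain = new ToyEditDomain();
        ToyLightweightSystem system = new ToyLightweightSystem();
        system.setEventDispatcher(new ToyDomainEventDispatcher(domain));
        system.simulateCanvasClick(10, 20);
        System.out.println(domain.log);
    }
}
```

The point of the adapter layer in this sketch is that the bridge never needs to know about the domain; swapping in a different dispatcher changes where events end up without touching the canvas side.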

Event handling is done in two parts: direct interaction and indirect interaction (my naming).

- Direct Interaction: while we are using GEF for editor interaction, draw2d also allows us to add mouse listeners that can interact with the figures in the diagram. In this part, the dispatcher searches for draw2d listeners in the figures below the mouse click, and if it finds one it calls the listener with the mouse event. If there is more than one listener in the Figure hierarchy, the topmost listener (z-axis) is called.

- Indirect Interaction: the editor directly handles the mouse event by forwarding the event to the currently active tool, in this case the

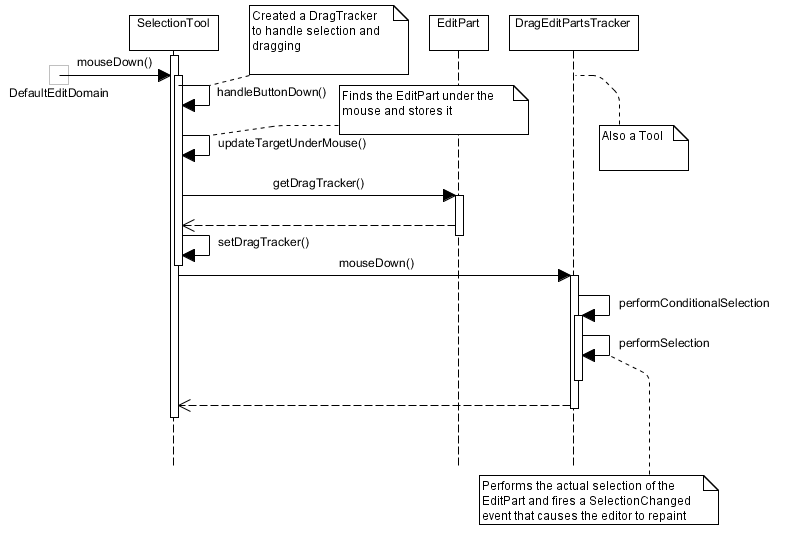

SelectionTool. The selection tool is actually a complex example since it does not work directly but delegates to aDragEditPartsTrackerwhich does the selection. This is done because we can drag the selection tool to do multiple selection. In any case, the selection tool forwards the request to theDragEditPartsTrackerwhich finds whatEditPartinstances are now selected in the editor, updates the domain’s internal data structures and fires a selection changed event, which updates the graphics on the screen to match the requested selection.
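The two parts described above can be sketched as a toy model in plain Java. Again, this is not the real GEF code: `ToyFigure`, `ToyDragTracker`, `ToySelectionTool` and `ToyTwoPhaseDispatcher` are hypothetical names, figures are reduced to rectangles kept in back-to-front order, and "selection" is just the name of the topmost figure under the click. The sketch also ignores details such as event consumption, mouse capture and drag handling.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical figure: a rectangle that may carry a draw2d-style mouse listener. */
final class ToyFigure {
    final String name;
    final int x, y, width, height;
    final boolean hasMouseListener;

    ToyFigure(String name, int x, int y, int width, int height, boolean hasMouseListener) {
        this.name = name;
        this.x = x;
        this.y = y;
        this.width = width;
        this.height = height;
        this.hasMouseListener = hasMouseListener;
    }

    boolean containsPoint(int px, int py) {
        return px >= x && px < x + width && py >= y && py < y + height;
    }
}

/** Hypothetical tracker: does the actual selection on behalf of the tool. */
final class ToyDragTracker {
    /** Returns the name of the topmost figure under the point, or null if none. */
    String select(List<ToyFigure> backToFront, int px, int py) {
        for (int i = backToFront.size() - 1; i >= 0; i--) {
            if (backToFront.get(i).containsPoint(px, py)) {
                return backToFront.get(i).name;
            }
        }
        return null;
    }
}

/** Hypothetical selection tool: it does not select by itself but delegates. */
final class ToySelectionTool {
    private final ToyDragTracker tracker = new ToyDragTracker();
    String selected; // null means nothing is selected

    void mouseDown(List<ToyFigure> figures, int px, int py, List<String> log) {
        selected = tracker.select(figures, px, py);
        log.add("tool: selection changed to " + selected);
    }
}

/** Hypothetical dispatcher that handles one click in two parts. */
final class ToyTwoPhaseDispatcher {
    final List<ToyFigure> figuresBackToFront = new ArrayList<>();
    final List<String> log = new ArrayList<>();
    final ToySelectionTool activeTool = new ToySelectionTool();

    void dispatchMousePressed(int px, int py) {
        // Part 1 (direct): call only the topmost listener under the click.
        for (int i = figuresBackToFront.size() - 1; i >= 0; i--) {
            ToyFigure figure = figuresBackToFront.get(i);
            if (figure.hasMouseListener && figure.containsPoint(px, py)) {
                log.add("draw2d listener on " + figure.name);
                break;
            }
        }
        // Part 2 (indirect): forward the event to the currently active tool.
        activeTool.mouseDown(figuresBackToFront, px, py, log);
    }
}

final class TwoPhaseDemo {
    public static void main(String[] args) {
        ToyTwoPhaseDispatcher dispatcher = new ToyTwoPhaseDispatcher();
        dispatcher.figuresBackToFront.add(new ToyFigure("back", 0, 0, 100, 100, true));
        dispatcher.figuresBackToFront.add(new ToyFigure("front", 10, 10, 20, 20, true));
        dispatcher.dispatchMousePressed(15, 15);
        dispatcher.log.forEach(System.out::println);
    }
}
```

In this model both figures have a listener and both contain the click, but only the one on top is notified, which mirrors the z-axis rule from the first bullet. The tool then runs regardless and hands the real work to its tracker.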

I tried to capture this interaction in the following two sequence diagrams. First, the generic part before the SelectionTool is invoked:

And second, the SelectionTool is invoked with the mouse event:

I hope that this explanation has shed a bit of light on how GEF works. For me, understanding what goes on under the hood has been a very hard task, and there are still many things that need to be cleared up. I think that in the next post I’ll show how the creation tool works, which I think is simpler and, in retrospect, is the one I should have analyzed first. So see you next time.